To continue defining a subcognitive architecture for an organism, the inputs to the system must first be clarified. These inputs arise from the sensory systems that feed into the brain. By brain here, I am primarily concerned with the portions of the brain that enable conscious thought, including the subconscious systems that provide the primitives on which conscious thought operates. My goal is to outline a computational model of human-like cognition that could serve as a controller for a robotic system. This goal is distinct from other possibilities, such as creating a neurobiological model to drive neuroscience research or to explain human behavior. Although I do hope to provide some insights that could inspire ideas for neuroscience, my focus is more on identifying the rudiments of what makes a cognitive system work to support the design of analogous embodied robotic systems. And the first step in doing so is to model the sensory inputs, which I will attempt to do here.

Approximating Cortical Topology

Each hemisphere of the neocortex is roughly a large flat sheet crumpled up and stuffed into the skull. The sheet consists of six layers (laminae) and can be loosely divided into numerous cortical regions such that there is dense connection within regions and sparser connections between regions. Interregional connections are realized by bundles of axons that lie beneath the sheet and can reach quite far across the cortex. These regions are perhaps the most obvious foundation for mapping out a modular architecture of cognition. The best-known scheme of such regions is the map of Brodmann areas, outlined in 1909, which subdivides the neocortex into about 50 regions based on the density, size, and number of neurons in each region.

Each cortical region takes input from other regions or from outside the cortex and sends outputs similarly; these inputs and outputs are typically associated with specific laminar layers, but the pattern of computation within each region is not yet generally understood.

For mathematical purposes, I will abstract each region as a continuous function that transforms an input tensor into an output tensor. An n-tensor is a collection of numbers that is organized into n dimensions, with each dimensional span called an axis. So a scalar number is a 0-tensor, a vector is a 1-tensor, and a matrix is a 2-tensor. Modern artificial neural networks operate on tensors, and the software libraries used in these neural networks support tensors with arbitrary dimensions. Each entry in the tensor represents an artificial neuron.

I will use a 3-tensor to represent cortical inputs and outputs. The first two axes will represent the spatial layout of the cortical sheet, and the third axis will represent a kind of information bandwidth, the number of features passed to the region. The spatial dimensions will be used in adaptive ways across regions, for example, to model space in vision and frequency in hearing. The number of features will likewise vary from region to region, but will not typically have a spatial component.

Entries in the tensors represent the neural activity coming into or out of the layers. I will model this activity as being a number between 0 and 1, with zero representing a minimal firing rate and one a maximal rate. In point of fact, neural firing rates are non-linear functions of the input because they reach a saturation point determined by the chemical and physical structure of the neuron. These nonlinearities are not as simple as the functions used in artificial neural networks, but they are present nonetheless. A firing rate is a dynamic value and hence is always a temporal average. Actual neuron firing is discrete, but the averages over time are more continuous.
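To make the idea of a firing rate as a temporal average concrete, here is a minimal sketch. It assumes a binary spike train with one entry per time step and treats one spike per step as the maximal rate; the function name `firing_rate` and the `window` parameter are my own illustrative choices, not part of any established model.

```python
import numpy as np

def firing_rate(spikes, window):
    """Sliding-window average of a binary spike train, giving values in [0, 1].

    spikes: 1-D sequence of 0s and 1s, one entry per time step.
    window: number of time steps to average over (an assumed parameter).
    """
    spikes = np.asarray(spikes, dtype=float)
    kernel = np.ones(window) / window
    # mode="valid" keeps only windows that lie fully inside the spike train.
    return np.convolve(spikes, kernel, mode="valid")

# A neuron that fires on every other step, then on every step.
spikes = np.array([1, 0, 1, 0, 1, 0, 1, 1, 1, 1])
rates = firing_rate(spikes, window=4)
```

The discrete spikes become a smoother sequence that rises from 0.5 to 1.0 as the neuron moves from alternating to continuous firing.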

Thus I adopt a modular architecture consisting of components that are functions from 3-tensors to 3-tensors, with all numeric values between zero and one. I do not claim that this abstraction correctly models neural anatomy in any realistic way; rather, it captures just those aspects of neural anatomy that I have chosen to model and potentially obscures or misrepresents others. But I think the choices above will prove reasonable for my purposes, and if not then I will revise them.
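As a rough sketch of what such a component might look like in code, the following toy region mixes features at each spatial position and squashes the result into the range between zero and one. The random weights, the sigmoid, and the name `make_region` are placeholders I have assumed for illustration; nothing here is meant to describe how a real cortical region computes.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def make_region(in_features, out_features, seed=0):
    """Return a toy region: a function from (H, W, in_features) to (H, W, out_features)."""
    rng = np.random.default_rng(seed)
    weights = rng.normal(size=(in_features, out_features))  # placeholder weights

    def region(x):
        assert x.ndim == 3 and x.shape[2] == in_features
        # Mix features independently at each spatial position, then squash to (0, 1).
        return sigmoid(x @ weights)

    return region

region_a = make_region(in_features=8, out_features=4, seed=0)
region_b = make_region(in_features=4, out_features=6, seed=1)

x = np.random.default_rng(2).uniform(0.0, 1.0, size=(16, 16, 8))
y = region_b(region_a(x))  # regions compose because shapes and value ranges match
```

The point of the sketch is only that regions with matching shapes can be chained, which is what makes the architecture modular.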

All senses that are consciously accessible are mapped onto the cortex. I will assume that they are uniquely mapped to a single region in each hemisphere and so define a sensory input as a special region whose neural activity is determined external to the neocortex. I will not stress over the details of this definition for the time being, because I am modeling something more like a robotic controller rather than faithfully representing neuroanatomy.

Each sense, then, will need to produce one or more sensory inputs to the neocortex, and these sensory inputs will be modeled as 3-tensors with values between zero and one. Now we will look at how these values can be derived in each sense, and what principles these inputs should follow.

Sensory Classes

With this basic model of cortical regions and sensory inputs, we can now look at the individual senses in turn. Let us separate the senses into a few classes. I will not attempt to model all human sensory inputs, but merely a sampling of those whose relevance seems critical to the subcognitive architecture I am describing.

The first class includes those senses that detect stimuli at a distance. I will call these ranged senses, and they include vision, hearing, and smell. The second class includes senses that detect stimuli in contact with the body. It will be convenient to think of this class as having only one member, namely touch, and it will be referred to as the contact sense. The third class consists of senses that are internal to the body and provide information about the body’s internal state. These senses are numerous and diverse. The most important of them for my purposes are taste, balance, and proprioception (the state of the joints), but there are also senses throughout the gut and bowels that I will mostly ignore. This class of senses I will name the internal senses. A fourth class of sensory inputs to the cortex includes the cortical mapping of the outputs of other organs within the brain, such as the amygdala and the hypothalamus. I will call these the subcortical inputs, to avoid calling them senses, and I will ignore them for the time being. The other three classes (ranged, contact, and internal) play different roles within the perceptual system and thus should be handled distinctly.

In addition and somewhat orthogonal to those listed above, there is a special class of senses that detect environmental rewards and punishments. These include portions of the senses of taste, smell, and touch. These reward stimuli are managed outside of the neocortex, and so they are not sensory inputs as I have defined them. But they are inputs to the overall architecture of the mind that are critical for learning, and we will return to them eventually. For now, I will describe the first three sensory classes in turn.

Principles of Ranged Senses

Ranged senses serve the primary purpose of localizing percepts in space away from the body, and hence share several properties.

They detect stimuli within a particular spatial extent, which I will call the perceptual extent.

They are paired, left and right, and the pairing allows for triangulation of position.

They sense a specific, enumerable list of qualities, and within each sensed quality they also provide a measure of quantity.

They are dynamic, perceiving changes over time, and the senses are more or less tuned to detect such changes and from them to build a model of motion.

We will review these properties in turn.

Perceptual Extent

In order to sense stimuli across its perceptual extent, each ranged sense is endowed with specialized detectors. The position of a percept within the perceptual extent can then be inferred from the activation of these detectors. This inference is not necessarily precise. For smell, the extent of detection is quite large, extending spherically out from the nostrils in all directions, but the numerous scent detectors in the nose are oriented towards identifying types of smells more so than position. Roughly the same statements also hold for hearing, with some obvious differences. In contrast, the perceptual extent for sight is not spherical, but spreads out as two cones, one emanating from each eye in the direction the eye is pointing. One of the tasks of the downstream perceptual system is to map the perceptual extent of the various senses together into a single coherent map of the world.

Stereo Senses for Localization

The left and right pairing of ranged senses enables approximate three-dimensional localization. Without this pairing, neither smell nor hearing could localize stimuli, and sight could only localize in two dimensions. But paired nostrils mean that there is potentially a difference in odor intensity between left and right, and the strength of the difference indicates position of the odor on a one-dimensional axis stretching from left to right. By moving the head around, a second dimension can be added. Moving the body in space adds a third dimension. The exact same analysis holds for hearing. Sight is special because it perceives three dimensions all at once; the two-dimensional visual fields can be used to triangulate and so approximate depth. In all cases, the perceived location of objects out in the world is approximate, but by aligning multiple senses, this error can be significantly reduced. Localization does not only happen in the neocortex; significant processing of the paired input signals occurs in subcortical organs such as the thalamus and the superior olives prior to reaching the primary sensory areas.
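The left-right differential described above can be sketched as a simple normalized difference. This is an illustrative formula of my own choosing, not a claim about how the subcortical organs actually compute position; the function name `lateral_position` and the `eps` threshold are assumptions.

```python
def lateral_position(left, right, eps=1e-9):
    """Estimate position on the left-right axis from paired intensities.

    Returns a value in [-1, 1]: -1 is fully left, +1 is fully right, 0 is centered.
    left, right: non-negative intensities from the paired sensors.
    """
    total = left + right
    if total < eps:
        return 0.0  # no stimulus detected on either side
    return (right - left) / total

centered = lateral_position(0.5, 0.5)
to_the_right = lateral_position(0.25, 0.75)
far_left = lateral_position(0.5, 0.0)
```

A single reading only places the stimulus on one axis; repeating the estimate as the head turns or the body moves is what would supply the additional dimensions.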

Detecting Quality and Quantity

Each sense provides an array of detectors. Sight has four: rods to detect contrast (light/dark), and cones to detect roughly red, green, and blue. The rods and cones are not equally distributed across the perceptual extent; in particular, there are more rods than cones in the periphery and the retina also has a blind spot where the optic nerve attaches. For smell, the detectors are numerous and discrete, with olfactory receptors differentially matching several hundred different types of molecules passing through the nasal airstream. Actual smells are perceived as a pattern of responses in these receptors, much like a high-dimensional embedding in current AI systems. In hearing, the cilia vibrate in response to different frequencies along the cochlea, so that the detected qualities are laid out in the frequency dimension, producing something like a discrete Fourier analysis of the incoming sounds. In every case, the detectors can also sense magnitude, with neurons firing at a higher rate when exposed to greater stimulus.
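For hearing, the combination of quality (frequency) and quantity (magnitude) can be illustrated with an ordinary discrete Fourier transform. This is only an analogy in code: the sample rate, the test tones, and the normalization to the range between zero and one are assumptions I have made for the example, and the cochlea does not literally compute an FFT.

```python
import numpy as np

sample_rate = 8000                      # samples per second (assumed)
n = 800                                 # 100 ms of sound
t = np.arange(n) / sample_rate

# A loud 440 Hz tone mixed with a quieter 1000 Hz tone.
signal = np.sin(2 * np.pi * 440 * t) + 0.5 * np.sin(2 * np.pi * 1000 * t)

# Each frequency bin plays the role of one detector along the cochlea.
magnitude = np.abs(np.fft.rfft(signal))
freqs = np.fft.rfftfreq(n, d=1.0 / sample_rate)

# Scale so that the most strongly driven detector has activation 1.
activation = magnitude / magnitude.max()
loudest = freqs[np.argmax(activation)]
```

The bin at 440 Hz comes out as the most active detector, and the bin at 1000 Hz fires at about half that level, mirroring the relative amplitudes of the two tones.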

Dynamic Motion Detectors

Changes in the egocentric position of stimuli over time are reflected in changes in the firing rates of the various detectors. Because understanding motion is critical to the survival of the organism, the senses are tuned to detect these changes. Within vision, for example, there are ganglion cells in the retina itself (X, Y, and W cells) that detect moving objects. Subcortical neurons further enhance such detection so that once the sensory input reaches the cortex, motion information can be readily extracted.

The Contact Sense

The sense of touch differs from the ranged senses in that it primarily serves to localize sensations on the body rather than out in space. The contact sense can localize directly, and hence does not require paired sensors. Its perceptual extent is the whole surface of the body, and inside the body as well to some degree. But the other two design principles of sensory organization — detection of distinct qualities and sensation of the dynamics of motion — remain pertinent.

Like the ranged senses, touch has several differentiated detectors. Some sensors detect mechanical pressure and include fast-responding and slow-responding variants. Based on the interplay between the two, our brains can differentiate pressure, rubbing, vibration, repetitive tapping, and more. Neurons at the base of body hair detect objects in close proximity. Other neurons respond to temperature changes (thermoception), and yet other neurons respond to different forms of pain (nociception). The activity of these somatosensory neurons is aggregated and processed locally, so that neural coding from the skin already detects basic patterns of motion on the skin.

Internal Senses

The main internal senses of concern to this discussion are balance and proprioception. Properly speaking, the vestibular system for balance is part of the organs of hearing, and the neurons responsible for proprioception are part of the somatosensory system. But the properties that balance and proprioception detect are global and are mainly needed to modulate other systems.

The sense of balance is based on a set of three tubes filled with fluid that detect the level of the head in each of the three major coordinate axes by the movement of hairs within the tube. Next to these tubes are two small organs that detect acceleration, allowing the brain to distinguish between head orientation and movement, again demonstrating the principle of dynamics in the sensory system, as well as the principle of qualities (namely, three values for orientation and two values for acceleration). The vestibular system has its own primary cortical region (the parietoinsular vestibular cortex) but also feeds into associational areas for vision and proprioception at the junction of the temporal and parietal lobes.

Proprioception is part of the somatosensory system. There are nerves throughout the body that detect compression in muscles, tendons, and joints. Following the principle of dynamics, aggregations of neurons can detect the speed of movement as well. These neurons provide rich information about the position of the body in space, and they have their own dedicated pathway through the spinal cord. Proprioceptive information is processed subconsciously in the cerebellum and elsewhere, but the conscious sensory input for proprioception goes to the primary somatosensory cortex, where touch is also processed.

Proprioception and balance are critical to the subcognitive architecture I am developing, because they determine how to integrate the contact sense with the ranged senses in order to populate the egocentric spatial map.

Mapping Senses to Tensors

Each sense maps to paired cortical regions, one in each hemisphere of the brain, called the primary cortex for the sense. As suggested above, the mapping is not direct. Vision, hearing, touch, balance, and proprioception are routed through the thalamus, where they are preprocessed before being sent to their respective primary sensory cortices. The olfactory (smell) system is evolutionarily older and connects in complex ways that are difficult to summarize succinctly; it even has its own cortices separate from the neocortex. Nonetheless, there are at least a few principles that can be extracted for how senses are mapped to cortical regions:

Lateralization: Each hemisphere individually receives all sensory information relevant to the side of the body that it manages. For the ranged senses, this information requires cross-lateralization of the paired sensors so that both sides of the brain can localize stimuli individually.

Two-Dimensional Layout: Information from the senses is coerced into two spatial dimensions in the cortex. These dimensions represent qualities specific to the sense in question, which are not necessarily spatial in nature. The dimensional layout is often referred to as a topographical map in neuroscience literature.

Features: Each sense encodes information about several properties into neural firing rates that can be represented as a time-varying vector.

We will now consider how these principles can be applied to each sense in order to choose tensor representations that model sensory input.

Vision

The visual pathway from the eyes passes along the optic nerve and through the optic chiasm, where the visual field of each eye is split into left and right halves, implementing cross-lateralization, and then on to the thalamus, specifically the lateral geniculate nucleus. The left halves of the visual field are sent to the primary visual cortex (cortical region V1) in the right hemisphere of the brain, and the right halves are sent to the left hemisphere. This cross-lateralization is performed because the right hemisphere controls the left side of the body while the left hemisphere controls the right. Each side needs complete information to direct its sphere of control.

The primary visual cortex uses a retinotopic layout; that is, neurons are laid out like pixels in a photograph according to how the rods and cones are laid out on the retina. At each “pixel”, various features are available, including activation of rods and cones, but also some rudimentary information about motion at that pixel. These features are doubled in that there is partially aligned data from both eyes available.

Image taken from Brewer & Barton, 2012, Figure 2.

Note that the arrangement of the retinotopic neurons is best understood using polar coordinates, as shown in the image above. That is, there is a dimension representing distance from the center point of the retina, and another dimension representing the angle from the center.

As a model, we can suppose that visual input is a 3-tensor of shape (R, A, 2c), where R is the radial resolution (eccentricity) and A is the angular resolution of the retina. This resolution would not uniformly represent different radii, but would devote more resources to the fovea at the center. The final dimension c represents the number of available features, which I might attempt to enumerate as contrast, red, green, blue, plus additional pairs of up-down and left-right movement for each of these, yielding c=12. The final dimension is doubled to 2c to represent the inclusion of both left and right visual fields, as implemented in ocular dominance columns.
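The shape (R, A, 2c) can be written out as a small sketch. The resolutions, the eccentricity range in degrees, the geometric spacing of the rings, and the feature names are all assumptions I have picked for illustration; the angular axis covers a full circle here for simplicity, ignoring the split by hemisphere.

```python
import numpy as np

base = ["contrast", "red", "green", "blue"]
motion = ["up_down", "left_right"]
# Four base features plus two motion features for each of them.
features = base + [f"{b}_{mv}" for b in base for mv in motion]
c = len(features)

R, A = 32, 64  # radial and angular resolution (assumed values)

# Geometric spacing packs more rings near the fovea than in the periphery.
eccentricity = np.geomspace(0.5, 90.0, num=R)                 # degrees (assumed range)
angle = np.linspace(0.0, 2 * np.pi, num=A, endpoint=False)    # radians

visual_input = np.zeros((R, A, 2 * c))

def feature_index(name, eye):
    """Index along the last axis for a named feature from the 'left' or 'right' eye."""
    offset = 0 if eye == "left" else c
    return offset + features.index(name)

# Example: full contrast on the innermost ring, as seen by the right eye.
visual_input[0, :, feature_index("contrast", "right")] = 1.0
```

Stacking the two eyes along the feature axis keeps their data aligned at each retinotopic position, which is the property the doubled dimension is meant to capture.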

The actual primary visual cortex does not exactly match this description, but the model is sufficient in any case. If the enumerated features are incomplete or incorrect, then we can simply swap the correct features into the model. If feature detectors are not evenly distributed across the region input, as for example rods and cones are not, then we can interpolate smoothly across the input area to fill in our model with reasonable features.

Hearing

The hearing pathway runs along the auditory nerve from the cochlea through the superior olivary nuclei to the medial geniculate nucleus of the thalamus. Along the way, these subcortical organs compute differences in timing and intensity at each frequency across the input from the two ears (interaural differences). The primary auditory cortex (A1) in the superior temporal lobe of each hemisphere of the neocortex then receives this processed information.

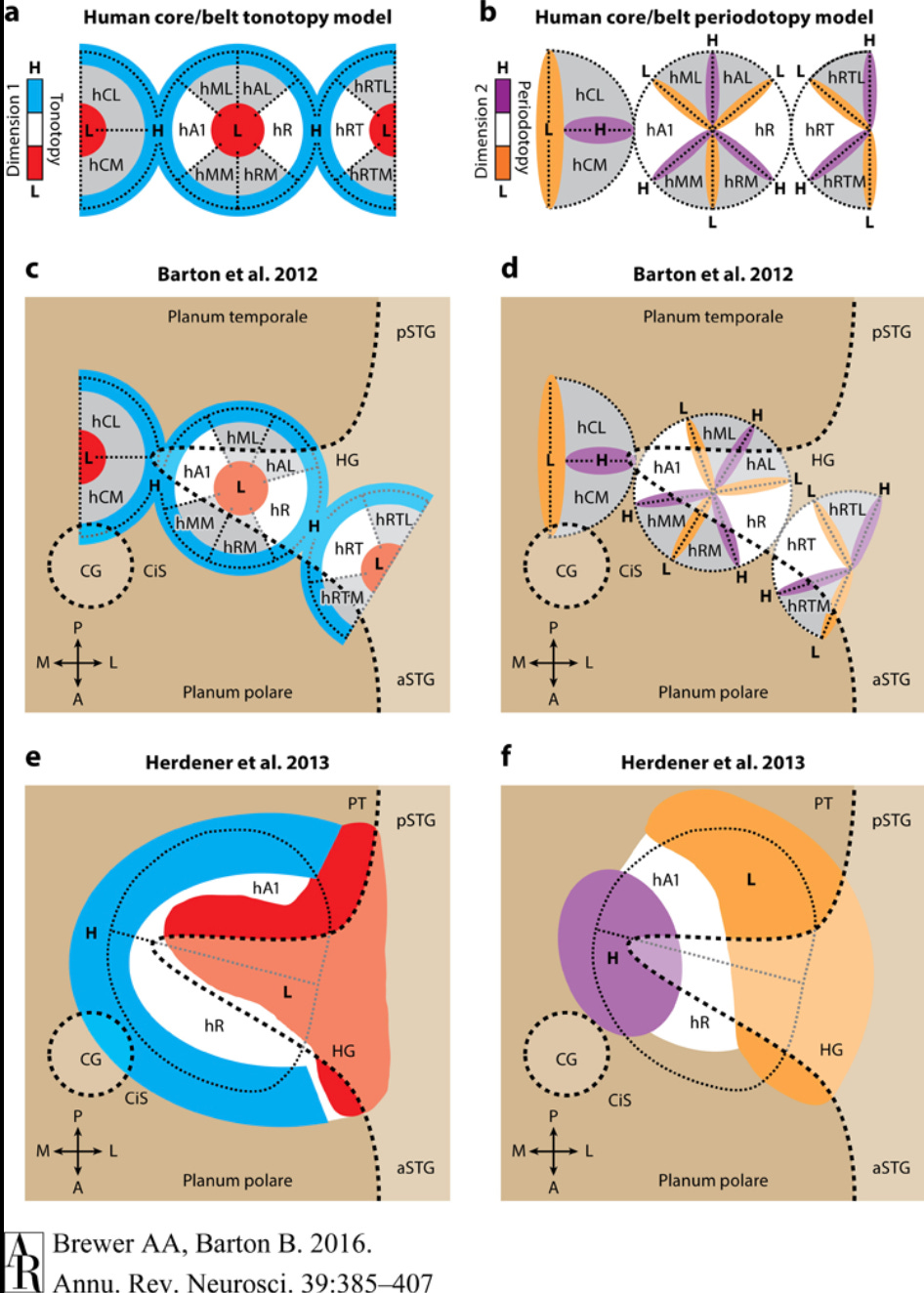

The spatial layout of the primary auditory cortex is tonotopic in one dimension and periodotopic in the other dimension (Brewer & Barton, 2017). Tonotopic arrangement means the neurons are laid out along the frequency dimension, from low frequencies to high, and periodotopic arrangement means that an orthogonal set of neurons detects information relating to periodic amplitude variations in the other dimension.

Image taken from Brewer & Barton, 2017, Figure 5.

The term periodicity here can be confusing because it does not refer to the periodicity of the sound wave itself but rather to the periodicity of the amplitude of sound considered as a wave in its own right. For example, if I play a scale on a clarinet versus the same scale on a piano, then the frequencies will be the same, but the pattern of sound amplitude will vary between the instruments. The piano will have a fast onset and fast decay of amplitude, whereas the clarinet will have a slower onset and a more sustained amplitude thereafter. These are the kinds of distinctions represented in the periodotopic dimension of sound.

One quick note about periodotopy. In human speech, there is a fundamental frequency of the voice that comes from the anatomy of the speaker, and individual sounds are detected through frequency ratios against this fundamental frequency. By extracting both amplitude modulation (the fundamental) and raw frequency (the speech formants) from hearing, the auditory system can readily perform source separation to identify individual speakers and isolate their speech. This problem is far more difficult if only the raw frequencies are available.

Cross-lateralization of sound is reflected in a series of alternating stripes of two kinds. The first kind (EE) is excited when a frequency is detected in both ears, and the second kind (EI) is excited when a frequency is detected in the ear corresponding to the hemisphere and inhibited by the same frequency in the other ear. Thus activity in EE indicates that a sound is heard on both sides, whereas activity in EI indicates it is heard only on one side. The change of activation between these two indicates movement of the stimulus relative to the body and can be used for localization.

In addition to tone, period, and lateralized information, it is also likely that the primary auditory cortex receives information about gradients in frequency and amplitude, given that such information is processed earlier in the auditory pathway.

Therefore, we can tentatively model the sensory input for hearing as a 3-tensor with shape (f, p, a) where f ranges over raw frequencies from low to high, p ranges over amplitude modulation frequency, and a ranges over enumerated features including EE and EI and their gradients.
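Here is one way the (f, p, a) tensor might be assembled for a single hemisphere. The formulas are stand-ins I have assumed: EE is taken as the smaller of the two ear signals (active only when both ears detect the sound), EI as the ipsilateral signal minus the contralateral one, clipped at zero, and the gradients are taken along the frequency axis and shifted so that 0.5 means no change. The resolutions are likewise arbitrary.

```python
import numpy as np

F, P = 64, 16  # tonotopic and periodotopic resolution (assumed values)

def hearing_input(ipsi, contra):
    """Build an (F, P, 4) tensor from same-side and opposite-side ear activity.

    ipsi, contra: arrays of shape (F, P) with values in [0, 1].
    Features along the last axis: EE, EI, and their frequency gradients.
    """
    ee = np.minimum(ipsi, contra)             # excited by both ears
    ei = np.clip(ipsi - contra, 0.0, 1.0)     # excited by ipsi, inhibited by contra

    def shifted_gradient(z):
        # np.gradient along axis 0 lies in [-1, 1]; map it to [0, 1].
        return 0.5 + 0.5 * np.gradient(z, axis=0)

    return np.stack([ee, ei, shifted_gradient(ee), shifted_gradient(ei)], axis=-1)

# A single tone heard only in the ear on the same side as this hemisphere.
ipsi = np.zeros((F, P))
ipsi[10, 3] = 1.0
one_sided = hearing_input(ipsi, np.zeros((F, P)))

# The same tone heard equally in both ears.
two_sided = hearing_input(ipsi, ipsi.copy())
```

In the one-sided case only the EI feature responds at the tone's position, and in the two-sided case only EE responds, which matches the verbal description of the two stripe types.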

Touch

The primary somatosensory cortex (S1) receives input from touch sensors through the spine. Like most other senses, this input first passes through the thalamus, where certain sensory inputs can be selectively inhibited. In extreme situations, for example, the thalamus can block pain at this level so that it is not consciously experienced, as happens in cases of psychological shock or when an athlete plays through a game despite an injury.

The spatial layout of sensory cortex is somatotopic, meaning that the spatial layout of this cortical region essentially takes the skin and stretches it out onto a two-dimensional sheet in such a way that most positions that are close to each other on the body are also close within the cortical mapping (Willoughby, Thoenes, and Bolding, 2021). The mapping is not purely topological; the surface is “torn” so that the mapping unwraps the skin from the body, which means, for example, that if someone traces the circumference of the arm then neighboring neurons in the cortex are excited until at some point the excited neuron jumps discontinuously to the other side of the cortical region. In addition, the inside of the mouth is mapped to its own region, as are the gut and the genitals.

Illustration from Anatomy & Physiology, Connexions Web site, Jun 19, 2013.

Unlike with ranged senses, touch is purely lateralized with no cross-lateralized mapping; the left side of the body is mapped to the right primary sensory cortex and vice versa.

At each somatotopic position, all of the various sensors of the skin are available, including pressure, vibration, direction of movement for pressure, proximity and direction of movement of the cilia, and pain. These form the features of the tactile system.

Thus we can model touch as a 3-tensor with shape (k, m, l), where k ranges from medial to lateral in the cortex and represents, progressively, the genitals, toes, feet, legs, torso, arm, hands, head, tongue, and throat; m ranges from anterior to posterior and roughly represents the circumference of the body; and l ranges over the discrete features of touch. In reality, the spatial map is not perfectly dimensionalized, and we should imagine instead that for each spatial coordinate (x,y) with 0 < x < k and 0 < y < m, the point (x,y) represents a specific point on the body, allowing different places on the body to have relatively larger or smaller representation in the model as needed (as in fact the hands and mouth both have outsized representation).
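The lookup from cortical coordinates to body locations can be sketched as follows. This is a deliberately coarse illustration: the row assignments, the extra rows given to the hands, the circumference resolution, and the feature names are all assumptions, and a fuller model would assign a body point to every (x, y) cell rather than to whole rows.

```python
import numpy as np

# One entry per medial-to-lateral row; repeating "hands" gives them more cortex.
rows = ["genitals", "toes", "feet", "legs", "torso", "arm",
        "hands", "hands", "hands", "head", "tongue", "throat"]
features = ["pressure", "vibration", "pressure_motion",
            "hair_proximity", "hair_motion", "pain"]

k, m = len(rows), 6           # m: resolution around the circumference (assumed)
num_features = len(features)  # the axis called l in the text

touch_input = np.zeros((k, m, num_features))

def cells_for(part):
    """Row indices of the cortical cells that represent the given body part."""
    return [x for x, name in enumerate(rows) if name == part]

def stimulate(part, feature, value):
    """Set one feature to a firing rate across all cells for a body part."""
    touch_input[cells_for(part), :, features.index(feature)] = value

stimulate("hands", "pressure", 0.8)
```

Because the hands occupy three rows while the toes occupy one, the same stimulus drives three times as many cells, which is the kind of outsized representation the lookup allows.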

Smell

The sense of smell is archaic in an anatomical and evolutionary sense, meaning that it breaks all the rules. Hundreds of distinct neural receptors in the nasal passages detect various molecular signatures trapped in the mucus from the airstream. These receptors are distributed through the nostrils but are aggregated into glomeruli by type in the olfactory bulb, which does have a certain spatial arrangement for odors (called chemotopic). This spatial arrangement is not preserved, however, as the sense of smell passes from the olfactory bulb into the cortex (Giessel & Datta, 2014).

Essentially, the sense of smell is very much like an embedding in the sense of current deep learning research. The fact that certain molecular receptors activate does not indicate a specific odor. Rather, an overall odor is detected as a pattern of several such receptors, whence the individual receptors are not so important. Furthermore, this multiple activation pattern means that detecting when a new smell enters the room requires selective deactivation of previously active receptors, which is handled at the lower levels of the olfactory pathway.

From the olfactory bulb, which is the first stop in the neural processing of smell, olfaction passes to several structures, of which we will focus on the piriform cortex, which, unlike the neocortex, has three layers instead of six. In the piriform cortex, there is no topographical mapping of smell; every feature of smell can be perceived in every area. There are no neighbors; all the incoming olfactory data is available everywhere, quite distinct from the pattern with vision, hearing, and touch. From the piriform cortex, data passes on in various ways, especially to the frontal cortex, where it influences decision-making.

Olfactory output also impinges on the entorhinal cortex, which is neocortex, but this region is primarily concerned with navigation and places. Properly, this level of sensory input is less about understanding smell and more about identifying places with smell as one major input.

Other connections in the olfactory system completely bypass the neocortex, going to the amygdala or the hypothalamus. Some of these are involved in rewarding certain behaviors. For example, the olfactory system detects pheromones that can cause rewards for sexual behavior. Other pathways trigger reflexes including disgust or even vomiting (see the vomeronasal organ for details).

The point is that smell plays many roles in the brain, and not just a sensory role. The other point is that the sense of smell is bizarre from the perspective of the other senses. Nonetheless, for the sake of modeling sensory inputs, the output of the sense of smell is essentially a one-dimensional embedding. To fit a square peg in a round hole, I will model the sensory input from smell as a 3-tensor with shape (1,1,s), where s essentially represents the size of the smell embedding and hence the dimensions of smell available for later processing. I do not claim that these dimensions are interpretable in an explanatory sense, because they do not seem to be so. I will say that these dimensions should include information about the change in smell, which is detectable because as in the tactile system, certain nerve cells (the tufted cells) respond to changes more quickly than others (the mitral cells). I also assert that the embedding must encode some location information based on differentials between the paired nostrils.
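To show what a (1, 1, s) input carrying change and location information might look like, here is a hand-built sketch. A real smell embedding would not have interpretable slots like these; I lay them out explicitly only to illustrate the shape, and the function name, the three-part layout, and the rescaling to the range between zero and one are all my assumptions.

```python
import numpy as np

def smell_input(left, right, prev_left, prev_right):
    """Pack paired-nostril receptor activity into a (1, 1, s) tensor.

    Each argument is a 1-D array of receptor activations in [0, 1],
    for the current and previous time step.
    """
    mean_now = 0.5 * (left + right)
    mean_prev = 0.5 * (prev_left + prev_right)
    change = 0.5 + 0.5 * (mean_now - mean_prev)   # 0.5 means no change over time
    lateral = 0.5 + 0.5 * (right - left)          # 0.5 means equal in both nostrils
    return np.concatenate([mean_now, change, lateral]).reshape(1, 1, -1)

left = np.array([0.2, 0.8, 0.0])
right = np.array([0.4, 0.8, 0.0])
nothing = np.zeros(3)
smell = smell_input(left, right, nothing, nothing)
```

With three receptor types the embedding has s = 9 entries, and there are no spatial neighbors at all, in keeping with the absence of topography in the piriform cortex.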

One key reason why smell behaves differently from the other senses is that whereas sight, hearing, and touch are crucial for providing feedback during task performance, smell is less so. Rather, smell primarily serves three purposes from a cognitive point of view, namely, to recognize places, retrieve memories, and condition high-level decision making as opposed to direct motor control. And if O’Keefe (2003) is correct that episodic memory is essentially a human innovation built on the earlier place navigation infrastructure, then these three purposes further reduce to just two.

Taste

Briefly, in contrast to smell, taste is topographically organized in a gustatopic map (Chen et al., 2011). The five currently known tastes (sweet, bitter, salty, sour, savory / umami) each have their own regions in the insular cortex at the boundary of the temporal and frontal lobes (Prinster et al., 2017). The spatial layout of taste is not dimensional. Rather, if we represent taste by a 3-tensor with shape (d, e, h) then essentially each coordinate (x,y) with 0 < x < d and 0 < y < e would map into one of five contiguous taste regions, with each one possibly recapitulating the topography of the tongue and including temporal gradients in the features ranged over by h.

Image from Prinster et al., 2017, Figure 2

With that said, one of the primary roles of taste is to indicate rewards and punishments for the identification of an object as potential food. In this role, taste is more important as a learning signal than as a sensory input.

Proprioception and Balance

I will not belabor proprioception. Proprioceptive signals arrive at the brain up the spine through the dorsal-column medial lemniscus pathway alongside the sense of touch. Technically speaking, proprioception is a part of the sense of touch, and it is mapped into the primary somatosensory cortex (S1), although it does end up in a separate area of S1 (Brodmann area 3a as opposed to 3b, Cole et al., 2021). In that sense, we can assume that the representation (k,m,l) of touch has already handled proprioception.

But the main interest of proprioception is that it allows us to localize the output of other senses in egocentric space. As mentioned above, such localization depends critically on an area at the boundary of the parietal and temporal lobes, where the vestibular input for balance also arrives. Using information from the state of the joints and the state of balance, it becomes possible to align the output of the ranged senses generally with the sense of touch, which is critical for task feedback.

Therefore, let us assume that proprioception produces its own input (k, m, r) that is aligned with the somatosensory input and represents the configuration of each body part, with r ranging over the features of proprioception, namely, the degree of compression along with directional gradients in this degree. Further, let us assume that the vestibular system provides an additional input with shape (1, 1, b) where b ranges over features including the current head orientation and its acceleration. These two sensory inputs, proprioception and balance, will be used to interpret spatial positioning of the stimuli perceived by other senses.
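The constraints stated so far can be collected into a small check: every sensory input is a 3-tensor with values between zero and one, and proprioception shares the (k, m) layout of touch. The sizes and feature names below are assumptions, with b = 5 following the earlier count of three orientation values and two acceleration values; `validate_inputs` is a hypothetical helper, not part of any library.

```python
import numpy as np

def validate_inputs(inputs):
    """Check the modeling assumptions and return each input's shape."""
    for name, x in inputs.items():
        if x.ndim != 3:
            raise ValueError(f"{name}: expected a 3-tensor, got {x.ndim} axes")
        if x.min() < 0.0 or x.max() > 1.0:
            raise ValueError(f"{name}: values must lie between zero and one")
    if inputs["touch"].shape[:2] != inputs["proprioception"].shape[:2]:
        raise ValueError("proprioception must share the (k, m) layout of touch")
    return {name: x.shape for name, x in inputs.items()}

k, m = 12, 6  # somatotopic resolution (assumed values)
proprio_features = ["compression", "compression_gradient_a", "compression_gradient_b"]
balance_features = ["orientation_x", "orientation_y", "orientation_z",
                    "acceleration_1", "acceleration_2"]

inputs = {
    "touch": np.zeros((k, m, 6)),
    "proprioception": np.zeros((k, m, len(proprio_features))),
    "balance": np.zeros((1, 1, len(balance_features))),
}
shapes = validate_inputs(inputs)
```

Keeping proprioception on the same grid as touch means that a cell's tactile features and the configuration of the corresponding body part can be read off at the same coordinate.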

Summary and Conclusion

In this post, I have outlined the structure of the sensory system with an intent to model sensory inputs to the cortex. I have chosen to model these inputs as time-varying 3-tensors where the interpretations and size of the axes are specific to each sensory system. These senses are organized according to principles that vary somewhat according to sensory class but that include generally (1) a perceptual extent; (2) capability for localization; (3) detection of motion and change over time; (4) detection of qualitative features each associated with an intensity measurement; (5) lateralization or cross-lateralization of sensory percepts; and (6) topographic mapping of senses, where the topography is specific to the sense.

In the next post, I will look at the architecture of perception, how localization is extracted by aligning and cross-checking sensory inputs, and how basic attributes are generated to support high-level cognitive functions.

Thanks for reading! Let me know what you found interesting in the comments.

Another fun post! I like the treatment of sensory I/O as time-varying 3-tensors coming out of the physical structure of cortical tissue, and it's interesting to think of other brain regions as subcortical input senses of sorts. In all my consulting projects, I like to tell clients it's important to "start with the sensors" - indeed, they are the foundation of reality for whatever intelligence / objective function we are interested in cultivating.

I think this post also underscores the importance of the hardware in intelligent systems. Olfaction being a uniquely challenging one ... One of the founders of a well-funded AI startup in San Francisco once told me the most interesting AI application he'd seen was a group using olfactory tissue from dogs connected to a computer so they could build a machine that could smell with hypersensitivity.

Do you think the 3-tensor model would work in interfacing real neural tissue to electronic sensors?